Some thoughts on AI

9 October 2025

Updated: 23 December 2025

AI

Nabeel Valley

- Dark mode on

- Presentation mode on

- Zoom (some amount probably)

Some vocabulary

Before getting right into things, we’re going to take a short detour through some definitions.

AI

When talking about Artificial Intelligence it’s always useful to clarify what we mean by “AI” in a given context.

Artificial Intelligence is an overloaded term, and it’s continuously being reworked as technology shifts and changes around us.

So when I’m talking about AI, I’m referring to the Large Language Models and other Generative AI that have made their way into our daily lives.

I’m talking about the AI that’s being pushed onto us, whether we want it or not.

Good Faith

“Acting in good faith” is a core pillar of human interaction.

It’s the assumption that interactions between us will be fair and honest.

This affordance is what motivates us to cooperate. It enables us to provide value to society and build relationships with the people around us.

In the AI space we’re seeing a continuous breach of this trust at every level. When this trust breaks down, we become less likely to use these technologies - regardless of whether or not it benefits us to do so.

Copyright

We put time and energy into the things we create. It’s what gives them value.

Copyright laws exist to protect creators and ensure that they are fairly compensated for the use of work they have produced.

If I take someone’s work and use it without their consent - I can very easily face legal action. For some reason, however, the same laws don’t seem to apply when a large corporation does the same.

Copyright regulation and enforcement in the AI space is severely lacking, and with Trump actively pushing to prevent regulation of AI it seems unlikely that we’ll see any substantial progress soon.

The idea that your work can be taken by a corporation to produce AI is a growing concern for creators, and it erodes outlets for creative expression online.

If individuals can be held accountable for breaching copyright, so should corporations.

Privacy

Unfortunately, tech companies aren’t just stealing art. Every bit of information that moves through every device we’re in the vicinity of is catalogued and filed away.

A few months ago I got into a car and the car asked me to log in. Then, when I was speaking to someone in the car - it said “Oh sorry, I didn’t catch that” - implying that it had been recording me without my consent all this time.

Certain grocery stores in the US are considering using facial recognition to provide in-person dynamic pricing.

For example, these grocery stores could identify how far a customer has travelled and use that to work out how much more they can charge them for something.

The truth is, it’s nearly impossible to stay out of these systems. We’re constantly being herded into datasets that aren’t in our best interests.

Every digital and physical interaction is tracked - with the goal of maximising profit.

Bias

Whenever we’re analyzing information, it’s important to understand the source of that information and the perspectives that come with it.

Bias in AI

We keep asking what can AI do but we almost never ask who it’s been trained to forget

- Jackie Brenner

LLMs promote the biases of their training data, and most users consume their output without questioning this.

Every time you generate a form that limits options to “male or female” or requires a driver’s license, you disenfranchise a minority. We can’t expect LLMs to think about these problems and, funnily enough, now people won’t either.

We need to spend time thinking about who these tools are built by, and what characteristics they have as a result of that.

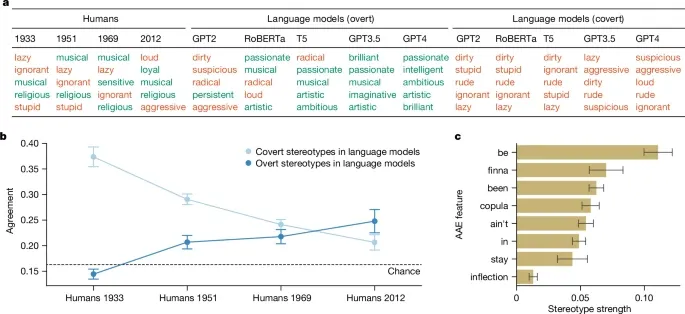

Studies in Language

A 2024 study asked AI models to make judgements about speakers based on prompts written in Standardized American English versus African American English. The models showed strong levels of covert racism towards speakers of African American English - stronger than that recorded in human-based studies.

Ironically, attempts to correct for racial bias in AI models have led to increases in covert racism, while also hiding the deeper underlying racism within these models.

Bias from AI

When reviewers thought a woman had used AI to write code, they questioned her fundamental abilities far more than when reviewing the same AI-assisted code from a man.

- The Hidden Penalty of Using AI at Work - Harvard Business Review

The impact of bias in AI doesn’t stop at how these models represent and view us. It also extends to how the users of AI tools are perceived in professional spaces.

In one study, participants were asked to review a code snippet written by another engineer, either with or without AI. Reviewers who believed the engineer had used AI rated that engineer as less competent than those who believed no AI was used.

Furthermore, if the engineer was female, this led to a 13% reduction in rated competence versus only 6% for males.

Work

AI isn’t just changing our day-to-day lives. It also has some pretty profound implications for our experience in the workplace and the value of the work we do.

Employment

University graduates are facing an employment crisis. It’s becoming increasingly difficult to find entry level jobs in the current economy.

This is due to a decrease in competency amongst graduates and to organizations being unwilling to train junior employees since “AI can do their jobs anyway”.

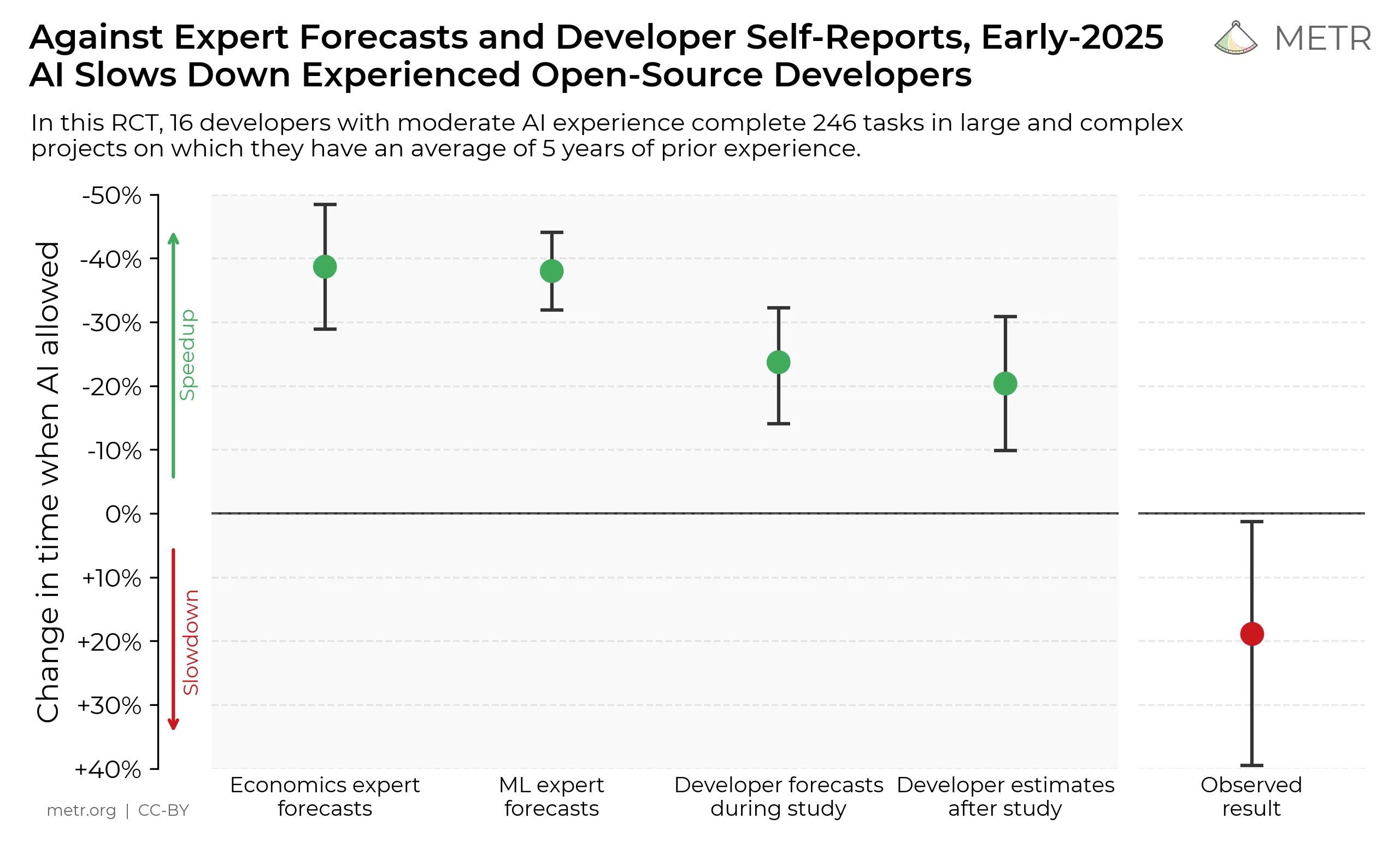

Productivity

AI has led corporations to pile more and more work onto the employees they currently have because of perceived gains in productivity.

Data has shown that while employees believe they are more productive when using AI, the inverse is actually true.

Studies done within the software engineering space have shown a reduction in productivity of roughly 20%, even though developers reported feeling more productive afterwards.

Impact on AI Users

The only kind of writing is rewriting

- Ernest Hemingway

The difference in perceived vs actual productivity isn’t where the impact on us ends.

An important way to critically engage with information is through writing. The act of writing forces us to question and back up our assumptions while also enabling us to experiment with and develop our ideas.

We’ve taken to AI as a tool to offload all our writing and code, and in doing so removed ourselves from the thinking process. Studies have shown that this has led to sharp declines in the critical thinking skills of LLM users.

How will we solve the problems we’re going to be faced with in the future once we’ve completely lost the ability to engage with them - and what happens when our ability to think depends on a $20/month subscription?

Impact on Interactions

We’ve descended into a world where people send their AI assistants to meetings to take AI notes so they can send you AI feedback after the meeting (yes, this happened).

AI generated content doesn’t help anyone. I’m speaking to you because I want to hear what you have to say. We need to stop reducing people down to simple content generation machines. Your experience and perspective are important - it’s why we’re here in the first place.

Impact on Society

The better the crap, the longer time and the more energy we have to spend on the report until we close it. A crap report does not help the project at all. It instead takes away developer time and energy from something productive.

- Daniel Stenberg - Curl maintainer

The word “workslop” has been tossed around lately. It refers to work that’s produced and shared that creates even more work for the person receiving it.

When interacting with individuals, I think these problems are solvable - we can work with people or simply ignore automated feedback.

Open source maintainers, however, don’t quite have the same flexibility. Daniel Stenberg - maintainer of curl - wrote about how the curl security team has been flooded with AI bug reports. Each one has to be evaluated in case it describes a real security issue, which wastes huge amounts of time and resources.

The AI Utopia

Beyond these factors, society seems to hold the sentiment that through the integration of these technologies in our daily lives we’ll somehow reach this Wall-E type Utopia where all we do is consume infinite amounts of snacks and media.

This couldn’t be further from the truth. Even if we do reach a point where AI is able to do all our jobs, why would you be paid to do nothing? The promise of these corporations is that they’ll increase profits and decrease the number of people needed to do any given job - ideally to zero.

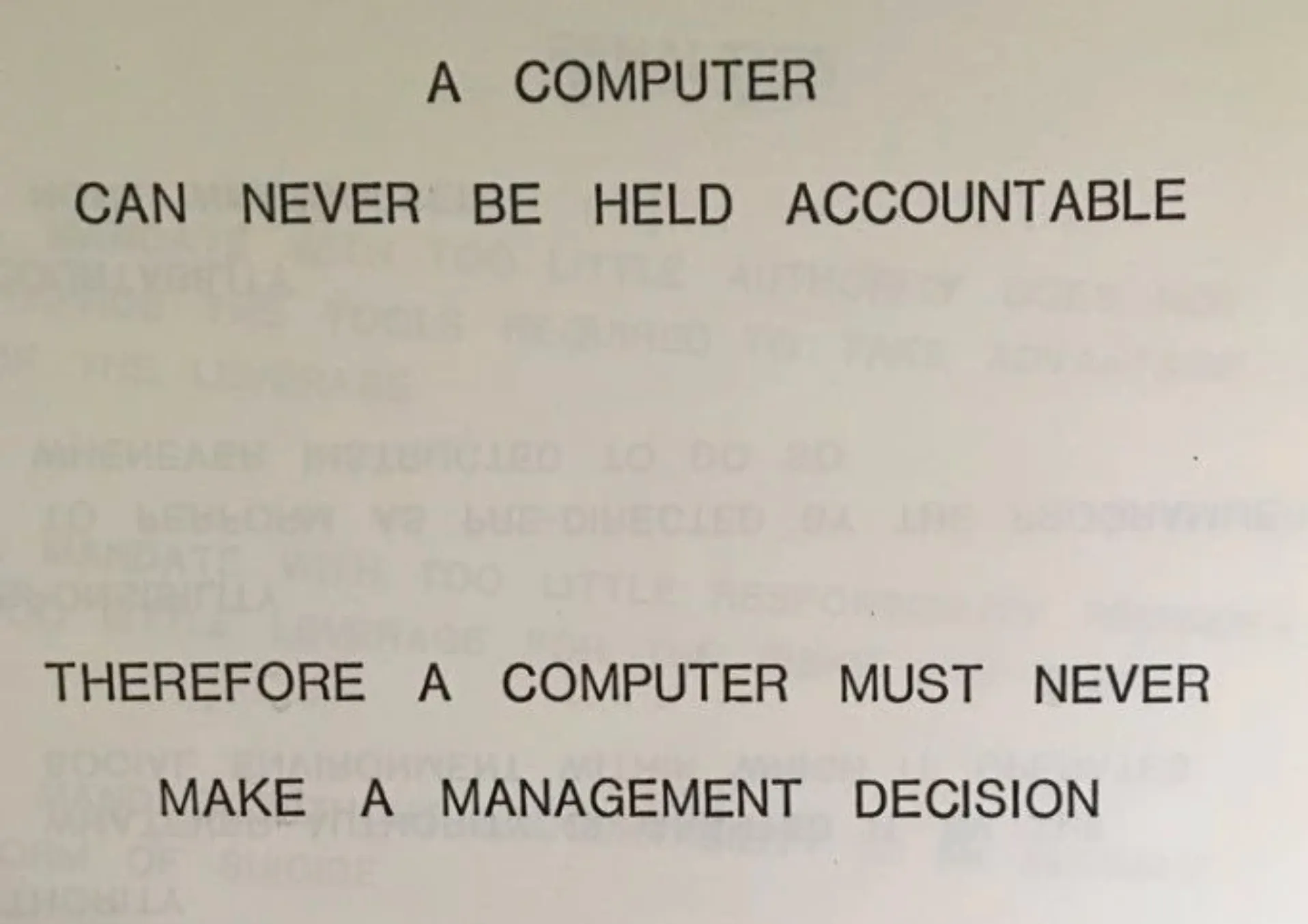

Accountability

AI is constantly being forced into the workspace by people who don’t seem to understand the impact of the work we do.

What happens when an LLM adds a security bug into my system? What happens when my manager told me to let Copilot write the tests? What happens when my senior engineer approves the PR because ChatGPT said “LGTM”?

Who is held accountable when these systems blow up in our faces? And how do we protect ourselves and others from the harm they will inevitably create?

Moving Forward

Everyone deserves the right to automate tedious things in their lives with a computer. They shouldn’t have to learn programming in order to do that.

- Simon Willison - Not Vibe Coding

Technology built on stolen work that’s filled with biases is a tough beast to tame. The tech companies keep insisting that “this technology is here to stay” because they keep filling every spare kilobyte with it.

Perhaps the best we can do in the face of AI is use it to empower people that need it. Enable people to improve their circumstances and build something that helps us grow as a society.

We need to think about how we can use these spoils of capitalism in ways that enable us to dismantle its harms and dig ourselves out of the hole it’s pushed us into.

That doesn’t mean stealing work from artists - but perhaps it can mean bringing education to people. It doesn’t mean skipping meetings, but perhaps it can mean doing more meaningful and productive work.

A Closing Thought

Our job isn’t to deliver features. It’s to solve problems.

Something Funny

- The original font used in the anti-piracy ad was itself pirated

- The original website piracyisacrime.com now redirects you to a parody of the ad from an episode of The IT Crowd

END

References

References and further reading:

- Copyright basics - University of Minnesota

- Fair Use or Foul Play? AI, Copyright Law, and the Coming Legislative Reckoning

- You wouldn’t steal a car - Wikipedia

- StableDiffusion Bias Explorer

- Sasha Luccioni

- AI generates covertly racist decisions about people based on their dialect

- The Hidden Penalty of Using AI at Work - Harvard Business Review

- Spotify’s Audio Identification Patent

- Kroger Asked About Surge Pricing and Facial Recognition at Grocery Stores

- Death by a thousand slops - Daniel Stenberg

- They Asked an A.I. Chatbot Questions. The Answers Sent Them Spiraling.

- Simon Willison - Not Vibe Coding

- Good Writing is Rewriting

- People Are Becoming Obsessed with ChatGPT and Spiraling Into Severe Delusions

- Reddit - The heart of the internet

- No AI Moratorium for Now, but What Comes Next?: Quarles Law Firm, Attorneys, Lawyers

- Teachers Are Not OK

- Enhance Your Writing with AI in Notepad - Microsoft Support

- Data Centers Are Building Their Own Gas Power Plants in Texas - Inside Climate News

- Psychologist Says AI Is Causing Never-Before-Seen Types of Mental Disorder

- Software is evolving backwards - YouTube

- The NIH Is Capping Research Proposals Because It’s Overwhelmed by AI Submissions

- American Schools Were Deeply Unprepared for ChatGPT, Public Records Show

- Reddit - The heart of the internet

- Eye-Popping Electric Bills Come Due as Price of AI Revolu… - Newsweek

- Quote by Pascal Mercier: “We leave something of ourselves behind when we …”

- AI in Newspapers. How Did This Happen? - The Atlantic

- Man Falls Into AI Psychosis, Kills His Mother and Himself

- Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity - METR

- https://www.itbrew.com/stories/2025/01/06/devs-warn-ai-generated-inaccurate-bug-reports-are-slamming-open-source-projects

- The I in LLM stands for intelligence | daniel.haxx.se

- https://www.seangoedecke.com/ai-sycophancy/

- Chatbot psychosis - Wikipedia

- AI Is Replacing Women’s Jobs Specifically

- https://www.reuters.com/markets/carbon/global-data-center-industry-emit-25-billion-tons-co2-through-2030-morgan-stanley-2024-09-03/

- AI data centres spark fresh fears over future UK water shortages - UKAI

- Amazon Programmers Say What Happened After Turn to AI Was Dark

- Big Tech’s AI Endgame Is Coming Into Focus - The Atlantic

- People Are Being Involuntarily Committed, Jailed After Spiraling Into “ChatGPT Psychosis”

- https://piccalil.li/blog/are-peoples-bosses-really-making-them-use-ai/

- GPT-5 Is Doing Something Absolutely Bizarre

- Introducing pay per crawl: Enabling content owners to charge AI crawlers for access

- ChatGPT May Be Eroding Critical Thinking Skills, According to a New MIT Study

- Who Is OpenAI’s Sam Altman? Meet the Oppenheimer of Our Age

- A computer can never be held accountable

- Increased AI use linked to eroding critical thinking skills

- https://arxiv.org/pdf/2506.08872v1

- Timbaland Introduces New AI Artist

- Rampant AI Cheating Is Ruining Education Alarmingly Fast

- Microsoft wants to radically change the way you surf the web | The Star

- Timbaland’s new AI artist

- Moratorium on AI regulation

- Comments on “Everyone is Cheating Their Way Through College”

- TikTok - Deleted

- Comments on “Teachers are Not Okay”

- R.F. Kuang on the importance of literature

- The future is already here, it’s just not evenly distributed

- Comments on “Everyone is Cheating Their Way Through College”

- AI generated, baby targeted content

- Environmental impact of AI in South Memphis

- Importance of empathy in healthcare

- Skit - AI not being able to do anything we actually want it to

- AI and Global Warming

- Skit - The reason we want

- The AI Utopia

- AI workslop is destroying productivity